INTRODUCTION

Over the past few years, LSI has all but owned the high-end RAID adapter market. With the LSI MegaRAID SAS 9265/66, LSI crushed the competition in almost every measurable benchmark. The LSISAS2208 dual-core RAID-on-Chip (ROC) was spectacular: it provided excellent sequential throughput and even maxed out the x8 PCIe GEN2 interface in certain benchmarks. LSI had a death grip on the market, and many insiders were unsure whether other companies could pose a serious threat.

Adaptec, meanwhile, was going through some growing pains. In 2010, it was acquired by PMC-Sierra, a move that looked great for the long term but hampered its products in the short term. Its Series 6 RAID adapters were serviceable, but they could not compete with LSI’s offerings.

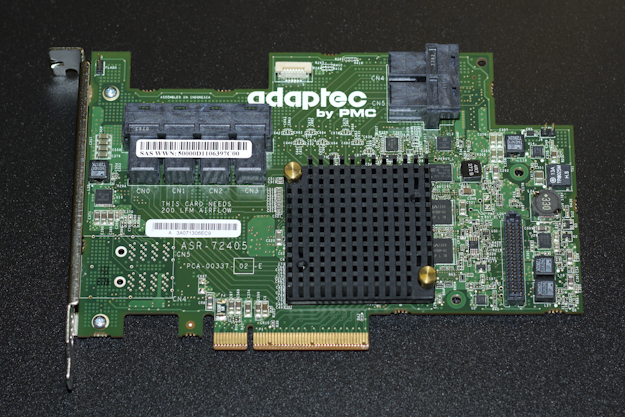

In October of 2012, the new Adaptec (by PMC) started to bear the fruits of that acquisition. With the release of the Series 7 RAID adapters, the first ground-up effort since the acquisition, Adaptec was finally ready to take on LSI.

The 7 Series uses PMC’s PM8015 ROC, which combines a x8 PCIe GEN3 interface with 6Gb/s SATA/SAS connections. The cards are offered in 8-, 16- and 24-port configurations.

This is where it gets interesting. With most RAID adapters, you only see 4 or 8 native ports. Even on cards where you can directly attach 16 or 24 drives, there is actually an expander sitting between the drives and the ROC. If you aren’t familiar with expanders, think of them as a network switch, where a few upstream ports connect to many downstream ports. They are great for extending connectivity, but they have two main drawbacks. The first is that the aggregate bandwidth of all the downstream ports is limited to the bandwidth of the upstream ports. The second is that they add latency. In the past, HDDs were never fast enough to expose these drawbacks. With SSDs, things have changed considerably.
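To make the expander bottleneck concrete, here is a minimal Python sketch. The function names are hypothetical, and it assumes every lane runs at 6Gb/s and that a busy expander divides its upstream bandwidth evenly among the drives behind it; real expanders schedule traffic less tidily, and the added latency is not modeled at all.

```python
# Sketch: how an expander caps aggregate bandwidth (hypothetical helper names).
# Assumptions: every lane runs at the same SAS link rate, and the expander
# splits its upstream bandwidth evenly across drives that are all busy.

def per_drive_share_gbps(drives: int, upstream_lanes: int, lane_gbps: float = 6.0) -> float:
    """Bandwidth available to each drive when all drives transfer at once."""
    upstream = upstream_lanes * lane_gbps
    downstream = drives * lane_gbps
    # Drives can never collectively move more than the upstream link carries.
    aggregate = min(upstream, downstream)
    return aggregate / drives


def oversubscription(drives: int, upstream_lanes: int) -> float:
    """Ratio of downstream lanes to upstream lanes (1.0 means no bottleneck)."""
    return drives / upstream_lanes


if __name__ == "__main__":
    # 24 drives behind an expander fed by 8 ROC lanes vs. 24 native ROC ports.
    print(per_drive_share_gbps(24, 8))   # 2.0 Gbps per drive
    print(per_drive_share_gbps(24, 24))  # 6.0 Gbps per drive
    print(oversubscription(24, 8))       # 3.0
```

With 24 native ports there is nothing to share, which is the advantage the PM8015 design claims.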

With the PM8015 ROC, all ports are native to the ROC; no expander is required. When you compare the theoretical bandwidth of the ASR-72405 against competing RAID adapters, the advantage is clear. The ASR-72405 has 18GB/s (24 ports x 6Gbps) of theoretical bandwidth, while competing RAID adapters have only 6GB/s (8 x 6Gbps). It’s that simple: 3x the number of ports equals 3x the bandwidth.

Now, that is all theoretical. When you take 8b/10b encoding into account, those numbers drop to 14.4GB/s and 4.8GB/s, respectively. We know that the x8 PCIe GEN3 interface is limited to roughly 8GB/s (about 7.9GB/s per direction once its 128b/130b encoding is accounted for), so we won’t be hitting 14.4GB/s any time soon (x16 GEN3? GEN4?). We also don’t know whether the PMC ROC can even handle that much data at once. Let’s take a look at what Adaptec does say about performance.
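The bandwidth arithmetic for the 24-port and 8-port cards can be checked with a short Python sketch. It counts only line-encoding overhead (8b/10b for 6Gb/s SAS, 128b/130b for PCIe 3.0) and uses decimal units; the helper names are illustrative, and real links lose a little more to protocol overhead.

```python
# Sketch of the SAS vs. PCIe bandwidth arithmetic. Assumptions: raw line rates,
# line-encoding overhead only (no SAS framing or PCIe packet overhead),
# and decimal units (1 GB/s = 8 Gb/s).

SAS_LANE_GBPS = 6.0     # 6Gb/s SAS/SATA line rate per port
PCIE3_LANE_GTPS = 8.0   # PCIe 3.0 transfer rate per lane


def sas_gbytes(ports: int, encoded: bool) -> float:
    """Aggregate SAS bandwidth in GB/s, optionally after 8b/10b encoding."""
    efficiency = 8 / 10 if encoded else 1.0
    return ports * SAS_LANE_GBPS * efficiency / 8


def pcie3_gbytes(lanes: int) -> float:
    """PCIe 3.0 bandwidth per direction in GB/s after 128b/130b encoding."""
    return lanes * PCIE3_LANE_GTPS * (128 / 130) / 8


if __name__ == "__main__":
    for ports in (24, 8):
        raw = sas_gbytes(ports, encoded=False)
        usable = sas_gbytes(ports, encoded=True)
        print(ports, raw, round(usable, 1))
    print(round(pcie3_gbytes(8), 2))
```

Under these assumptions the 24-port card’s 14.4GB/s of usable SAS bandwidth is nearly twice what an x8 GEN3 slot can carry.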

Unlike SSDs, RAID cards almost never list hard performance numbers in their datasheets. Adaptec is no different, but it does publish performance whitepapers that give us some direction. In RAID 0, expect at least 6400MB/s for 1MB sequential reads and 5200MB/s for sequential writes. The same configuration yields over 500,000 random 4KB read IOPS and almost 375,000 write IOPS. With RAID 5, reads match RAID 0, while sequential writes drop to 2.6GB/s. These are the numbers Adaptec observed, and they may depend on the SSDs being used.
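To put Adaptec’s random and sequential figures on the same scale, the sketch below converts IOPS into MB/s and expresses throughput as a share of the PCIe 3.0 x8 link. It assumes 4KB means 4096 bytes, MB means 10^6 bytes, and a link ceiling of about 7.88GB/s (encoding overhead only); the function names are hypothetical.

```python
# Sketch: express whitepaper figures in common units. Assumptions:
# 4KB = 4096 bytes, MB = 10**6 bytes, and the PCIe 3.0 x8 ceiling counts
# only 128b/130b encoding overhead (~7877 MB/s per direction).

PCIE3_X8_MBPS = 8 * 8e9 * (128 / 130) / 8 / 1e6


def iops_to_mbps(iops: float, block_bytes: int = 4096) -> float:
    """Throughput in MB/s implied by an IOPS rate at a given block size."""
    return iops * block_bytes / 1e6


def link_utilization(mbps: float) -> float:
    """Fraction of the PCIe 3.0 x8 ceiling used by a given throughput."""
    return mbps / PCIE3_X8_MBPS


if __name__ == "__main__":
    print(round(iops_to_mbps(500_000)))        # 4KB random reads, MB/s
    print(round(iops_to_mbps(375_000)))        # 4KB random writes, MB/s
    print(f"{link_utilization(6400):.0%}")     # 1MB sequential reads vs. link
```

Under these assumptions, 500,000 4KB reads work out to roughly 2GB/s, while the 6400MB/s sequential read figure already uses around four-fifths of the slot’s bandwidth.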

When you first look at the Adaptec 7 Series RAID adapters, you will notice something is a little off. Instead of the venerable SFF-8087 (mini-SAS) connectors, you are greeted by SFF-8643 (mini-SAS HD, iPASS+ HD) connectors. The new high-density connectors and cabling allow Adaptec to fit 16 ports in a low-profile, half-length PCIe form factor (LP MD2). For enterprise customers, that is a huge improvement, since most PCIe expansion slots in servers are LP MD2.

The SSD Review The Worlds Dedicated SSD Education and Review Resource |


In many published reports, a single Optimus cannot deliver latency within such a tight range. These are obviously system cache results.

All caching was disabled, except for any write coalescing the ROC was doing behind the scenes. Remember that the SSDs were not the bottleneck in the latency measurements; in fact, they were running at only 40% of their specified rates. Also, every test we have performed, as well as those on other sites, shows the Optimus to be a very stable SSD. So, to your point, there is some amount of caching happening outside of the DRAM, but it is very limited.

Any chance of reviewing the 71605Q to see how it stands up with 1 or 2TB worth of SSD cache and a much larger spinning array? Since it comes with ZMCP (Adaptec’s version of a BBU), you could even try it with write caching on.

I’d especially love to see maxCache 3.0 go head-to-head with LSI CacheCade Pro 2.0.

Considering I already own the 71605Q, which I bought practically “sight unseen” since there are still no reviews available, it is nice to see that the other cards in the line are living up to their claims.

Like I said in the review, I wish we had the time and resources to test every combination, but we can’t get to them all. I have both the 8- and 24-port versions and, yes, they always hit or exceed their published specifications. I agree, that would be a great head-to-head matchup. We have a lot of great RAID stories coming up; maybe we can fit it in. Thanks for the feedback!

Yeah, after I posted that I started brainstorming all the valid combinations you could test with those two cards, and there are quite a few permutations… It also might not be entirely fair to the older LSI solution, but it’s what they have available, and I don’t know of any release schedule for CC 3.0 or next-gen cards, so it might not hurt to wait for those.

I guess the best scenario to test would be best-case cache with worst-case spinners, so RAID-10/1E SSDs with RAID-6 HDDs. See how the two solutions do at overcoming some of the RAID-6 drawbacks, especially the write penalty.
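For readers unfamiliar with the RAID-6 write penalty, the sketch below applies the common rule-of-thumb penalties for small random writes. The drive count and per-drive IOPS in the usage are hypothetical, and the model ignores controller caching and full-stripe writes, which are exactly the effects an SSD cache layer would be trying to exploit.

```python
# Sketch of the classic small-random-write penalty: back-end disk I/Os per
# front-end write. Rule-of-thumb values only; controller caching and
# full-stripe writes (no read-modify-write) are not modeled.

WRITE_PENALTY = {
    "RAID-0": 1,   # write the block once
    "RAID-10": 2,  # write both mirror copies
    "RAID-1E": 2,  # two striped mirror copies
    "RAID-5": 4,   # read data + parity, write data + parity
    "RAID-6": 6,   # read data + two parities, write data + two parities
}


def effective_write_iops(drives: int, iops_per_drive: float, level: str) -> float:
    """Random-write IOPS the array can sustain from its back-end drives."""
    return drives * iops_per_drive / WRITE_PENALTY[level]


if __name__ == "__main__":
    # Hypothetical 12-drive HDD array at ~150 IOPS per drive.
    for level in ("RAID-10", "RAID-6"):
        print(level, effective_write_iops(12, 150, level))
```

Under these assumptions the same spindles sustain a third of the RAID-10 random-write rate once they are configured as RAID-6.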

I’m guessing the results would be fairly similar to the LSI Nytro review, but it would still be interesting to see how up to 2TB of SMART Optimus would do with a 20TB array.

Nice! How did you manage to connect the two cards (X2)?

“..We were able to procure a second ASR-72405 and split the drives evenly across the two…”

See my comment

I’m assuming they made a stripe on each RAID card and then did a software RAID 0 stripe of the two arrays into one volume. That would be why the processors showed 50% until under load.
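The commenter’s guess, one hardware stripe per card joined by a software RAID 0, can be illustrated with a minimal address-mapping sketch. The 256KiB stripe unit and the `locate` function are hypothetical, and the review itself does not confirm this exact setup; the point is only that consecutive chunks alternate between the two cards, so large transfers are split across both.

```python
# Sketch of RAID 0 address mapping across two hardware arrays (one per card).
# Assumptions: hypothetical 256KiB stripe unit, equal-sized member arrays,
# and round-robin chunk placement as in a plain software RAID 0.

STRIPE_UNIT = 256 * 1024  # bytes per chunk (hypothetical)


def locate(offset: int, members: int = 2, unit: int = STRIPE_UNIT) -> tuple[int, int]:
    """Map a byte offset in the striped volume to (member, offset in member)."""
    chunk, within = divmod(offset, unit)
    member = chunk % members          # chunks alternate between the cards
    member_chunk = chunk // members   # chunk index on that member array
    return member, member_chunk * unit + within


if __name__ == "__main__":
    for off in (0, STRIPE_UNIT, 2 * STRIPE_UNIT + 10):
        print(off, locate(off))
```

A 1MB sequential read under this layout touches four chunks, two on each card, which is how such a setup can approach the combined bandwidth of both adapters.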

Great review, though that SSD has me worried about sustained enterprise usage.